I’ve been working on a screen-space solution for blurring shadows that has the following features:

- Variable-sized kernel based on surface distance and view angle

- Rejection of samples if they lie on a non-contiguous surface, by identifying depth and normal discontinuities

- Bilateral, two-pass blur filter to maximize the possible kernel size

- Vertex and pixel shader 3.0, so the 64-instruction limit of older pixel shader models isn’t a concern

…but also works with the following constraints, to simplify matters:

- Flat surfaces only, no curves! (to simplify the sample rejection process)

- Full control over the rendering pipeline, so the “main render” pass can output weird values in order to be properly blurred
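To make the sample rejection idea concrete, here is a minimal CPU-side sketch in Python (not the actual HLSL). It assumes each pixel carries a view-space position and a unit normal, and that the surface under the center pixel is a plane, which is what the flat-surfaces constraint allows. The function name and both thresholds are hypothetical values picked for illustration.

```python
def dot(a, b):
    """Dot product of two 3-component tuples."""
    return sum(x * y for x, y in zip(a, b))

def same_surface(center_pos, center_normal, sample_pos, sample_normal,
                 normal_threshold=0.95, plane_threshold=0.05):
    """Return True if the sample seems to lie on the same flat surface
    as the center pixel. Both thresholds are illustrative assumptions."""
    # Normal discontinuity: the two normals must point almost the same way.
    if dot(center_normal, sample_normal) < normal_threshold:
        return False
    # Depth discontinuity: on a flat surface, the sample must sit on the
    # plane defined by the center position and the center normal.
    offset = tuple(s - c for s, c in zip(sample_pos, center_pos))
    return abs(dot(center_normal, offset)) <= plane_threshold
```

A rejected sample would simply be left out of the blur sum, and the sum would then be divided by the number of accepted samples.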

I quickly realized that it’s very hard, if not impossible, to do a variable-sized Gaussian filter in real time. If you change the kernel size, you change the standard deviation, and you need to recalculate the weights, which is too heavy for a pixel shader. So I chose to use a box filter with uniformly spaced samples.
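As a rough illustration of why the box filter is cheap, here is a small Python sketch of one 1D pass. It assumes a fixed number of taps whose spacing stretches with the kernel width, so every tap keeps the same 1/N weight whatever the size. The function names, the `sample` callback and the tap count are all made up for this example.

```python
def box_offsets(kernel_width, taps):
    """Uniformly spaced sample offsets spanning kernel_width, centered on 0."""
    if taps == 1:
        return [0.0]
    step = kernel_width / (taps - 1)
    return [-kernel_width / 2 + i * step for i in range(taps)]

def box_blur_1d(sample, center, kernel_width, taps=9):
    """Average `taps` samples around `center`.
    `sample` is any function mapping a coordinate to a value."""
    offsets = box_offsets(kernel_width, taps)
    # Every tap has the same weight, so nothing is recomputed
    # when kernel_width changes from pixel to pixel.
    return sum(sample(center + o) for o in offsets) / taps
```

Blurring a hard shadow edge such as `lambda x: 1.0 if x >= 0 else 0.0` with a wider `kernel_width` only spreads the same taps further apart.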

I totally would’ve liked to use a Poisson-disk distribution, but it’s not doable in a two-pass bilateral scenario. And you can’t achieve big kernels in real time without separating the process into two passes.
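The reason two passes matter can be shown with a plain, non-bilateral box blur, sketched here in Python on a list-of-lists image. A kernel with N taps per axis costs N×N reads in a single pass but only 2N reads when split into a horizontal and a vertical pass, and for a plain box filter the result is identical. The helper names and clamp-to-edge addressing are assumptions for the example. Once samples are rejected per pixel, the separation is only an approximation.

```python
def blur_rows(img, radius):
    """Horizontal box blur with clamp-to-edge addressing."""
    h, w = len(img), len(img[0])
    n = 2 * radius + 1
    out = [[0.0] * w for _ in range(h)]
    for y in range(h):
        for x in range(w):
            total = 0.0
            for d in range(-radius, radius + 1):
                total += img[y][min(max(x + d, 0), w - 1)]
            out[y][x] = total / n
    return out

def transpose(img):
    return [list(row) for row in zip(*img)]

def blur_two_pass(img, radius):
    """Horizontal pass, then vertical pass (done as rows of the transpose)."""
    horizontal = blur_rows(img, radius)
    return transpose(blur_rows(transpose(horizontal), radius))

def blur_single_pass(img, radius):
    """Reference 2D box blur: (2 * radius + 1) ** 2 reads per pixel."""
    h, w = len(img), len(img[0])
    n = 2 * radius + 1
    out = [[0.0] * w for _ in range(h)]
    for y in range(h):
        for x in range(w):
            total = 0.0
            for dy in range(-radius, radius + 1):
                for dx in range(-radius, radius + 1):
                    yy = min(max(y + dy, 0), h - 1)
                    xx = min(max(x + dx, 0), w - 1)
                    total += img[yy][xx]
            out[y][x] = total / (n * n)
    return out
```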

My XNA3 implementation currently uses no additional render targets (!) but just a “resolve texture”, and resolves from the main buffer quite a lot. An R32F (Single) render target is used for the shadowmapping process itself, but otherwise everything’s done with an A8R8G8B8 (Color) main buffer.

The shadowmapping solution is standard orthographic/directional depth testing, but I’m using Exponential Shadow Maps (ESM) to sidestep the depth biasing problems. I could never get my hands on a really good way to do Slope-Scale Biasing for standard depth testing, so ESM saves the day here. Otherwise, there’s no fancy cascading, splitting or projection tricks.
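For reference, the usual ESM visibility test replaces the hard depth comparison with an exponential falloff. The Python sketch below shows that general formula, not necessarily the exact one in my shader. It assumes linear depths in the light’s space, and the `sharpness` constant is an arbitrary illustrative value.

```python
import math

def esm_visibility(occluder_depth, receiver_depth, sharpness=80.0):
    """Exponential shadow test: 1.0 is fully lit, values near 0.0 are in shadow.
    `sharpness` is an assumed constant; higher values give a harder edge."""
    # A receiver at (or slightly in front of) the stored occluder depth
    # gives exp(>= 0), which clamps to 1.0, so no depth bias is needed.
    # A receiver behind the occluder falls off smoothly towards 0.
    return min(1.0, math.exp(-sharpness * (receiver_depth - occluder_depth)))
```

Because the result is a smooth value rather than a 0/1 comparison, a surface testing against its own stored depth comes out lit instead of producing acne.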

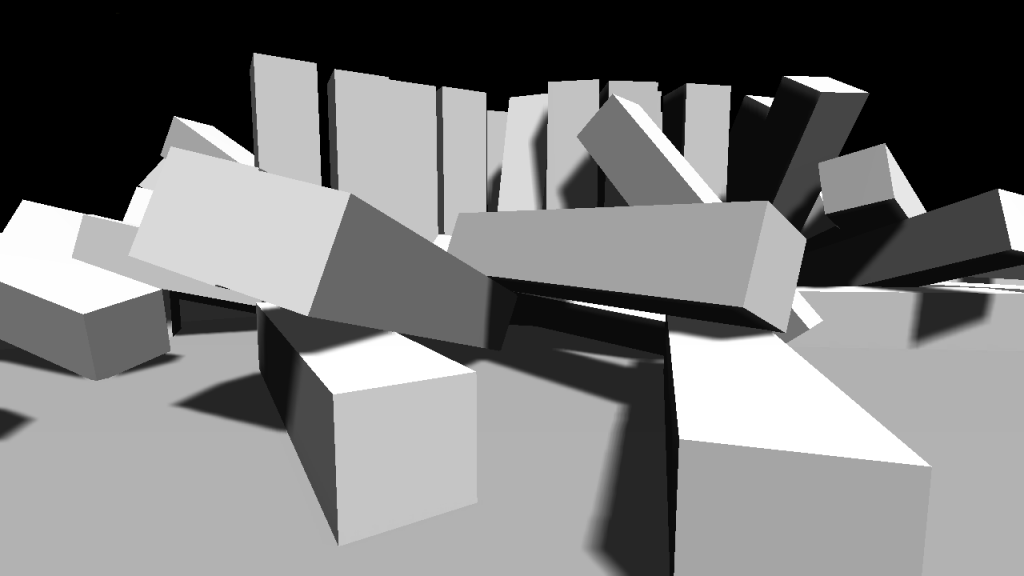

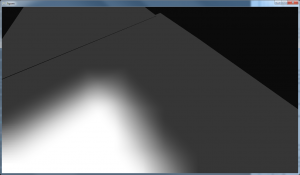

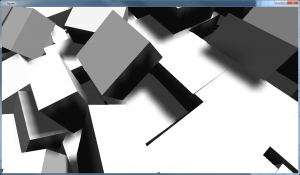

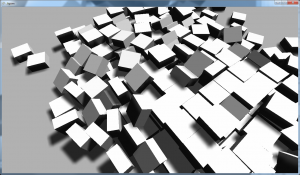

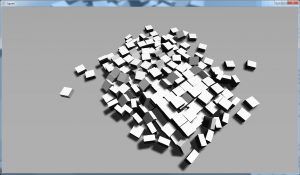

Here’s how it looks at different distances. The blur kernel stays approximately the same size in world-space even though the whole process is screen-space!
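One way to get that behavior, sketched in Python under a few assumptions: the main camera uses a perspective projection, the kernel has a fixed world-space radius, and the view angle is handled by scaling with the dot product between the surface normal and the direction to the viewer. The function name, field of view and screen height are placeholder values, and the actual shader may compute this differently.

```python
import math

def kernel_radius_pixels(world_radius, view_depth, n_dot_v,
                         fov_y=math.radians(60.0), screen_height=720):
    """Approximate screen-space blur radius for a fixed world-space radius.
    All default values are illustrative assumptions."""
    # How many pixels one world unit covers at this depth.
    pixels_per_unit = (screen_height / 2) / (math.tan(fov_y / 2) * view_depth)
    # Surfaces seen at a grazing angle are foreshortened on screen,
    # so the kernel shrinks with the cosine of the view angle.
    return world_radius * pixels_per_unit * abs(n_dot_v)
```

Doubling the distance halves the radius in pixels, which is what keeps the blur looking the same size on the surface itself.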

I’ll keep working on a clean sample to show, and I’ll definitely release the HLSL code if I can’t release the source to the whole thing. Stay tuned!